A recent text exchange between two colleagues captures the low-voltage anxiety many creatives are feeling about ChatGPT, an AI writing engine that’s made quite a buzz over the past two weeks: “I’m feeling very unsettled,” one writer messaged. “We are in ‘thinking’ professions, but with AI coming along, I wonder what our future will look like.”

Ever since San Francisco-based OpenAI released its content-writing tool to the public, social media has been awash with folks floored by how realistic the generated content reads. Headlines both extolling and lamenting the new technology have dominated my news feed. Harvard Business Review pronounced that we’ve entered a new era of AI.


For the uninitiated, ChatGPT is a bit of a mashup between a traditional search engine and the AI chatbots you might engage with on a brand’s website. Except, really, it’s so much more. Ask the bot questions or give it a writing prompt, and it responds in an eerily humanlike manner. It’s capable of remarkable creativity, too.

having a particularly bizarre morning thanks to chatgpt pic.twitter.com/BX0cJUMVzn

– juan (@juanbuis) December 1, 2022

While there are valid concerns about ChatGPT and its long-term implications, ignoring innovation rarely serves the people it might replace. So, in an effort to figure out how to work with this new ~~nemesis~~ tool, I gave ChatGPT a spin.

First, I created an online account with OpenAI and went through the platform’s cursory training; the platform is currently free. Based on my test drive, here’s where I found it useful, and where I still feel confident in my job security.

How ChatGPT can be useful to writers

Admittedly, there are several areas where I see serious potential for this tool to streamline my writing and editing work. Based on my short time playing with the platform, I’ve ranked the use cases below from strongest to weakest.

1. Ideation

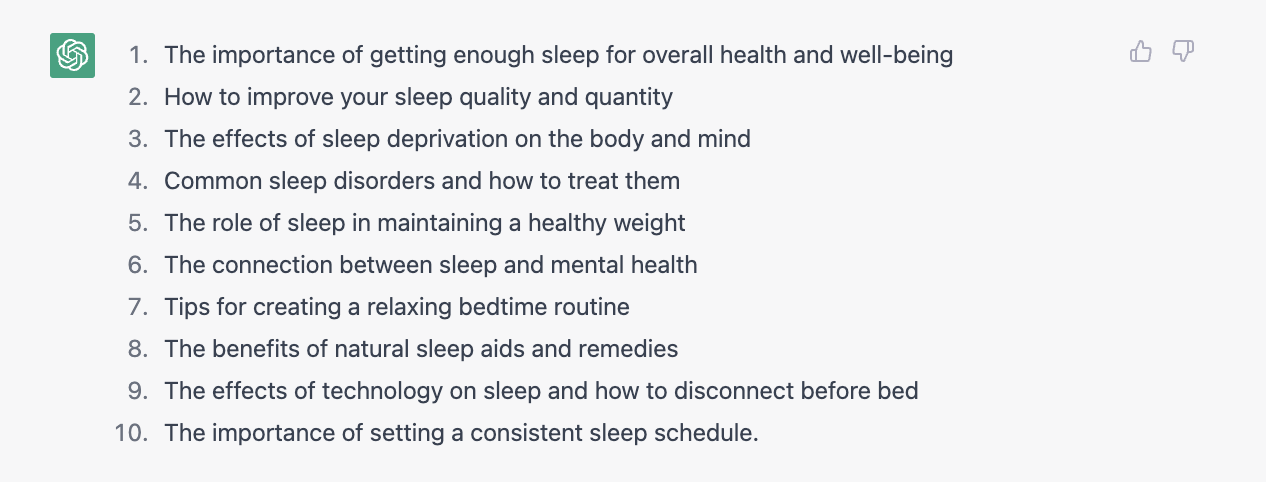

One of the most promising use cases for ChatGPT is its ability to spark new ideas or a more comprehensive way of thinking about a topic. For example, I asked ChatGPT for blog post ideas about sleep and health. Here’s what it gave me:

These are predictable and, frankly, not anything I couldn’t have come up with on my own. But by playing around with my prompt text, I was able to generate a few more interesting concepts.

When I asked the tool to “come up with unique article ideas on the relationship between sleep and health,” I got much more granular concepts ranging from “the role of sleep in regulating hormonal production, including growth hormone and melatonin” to “the potential benefits of maximizing deep sleep, including improved memory consolidation and learning.”

These ideas still aren’t oozing with originality, but I could see a scenario in which one of these concepts turns into a pitch for a sleep-tech client.

Rating: 4 out of 5 stars

2. Headline generation

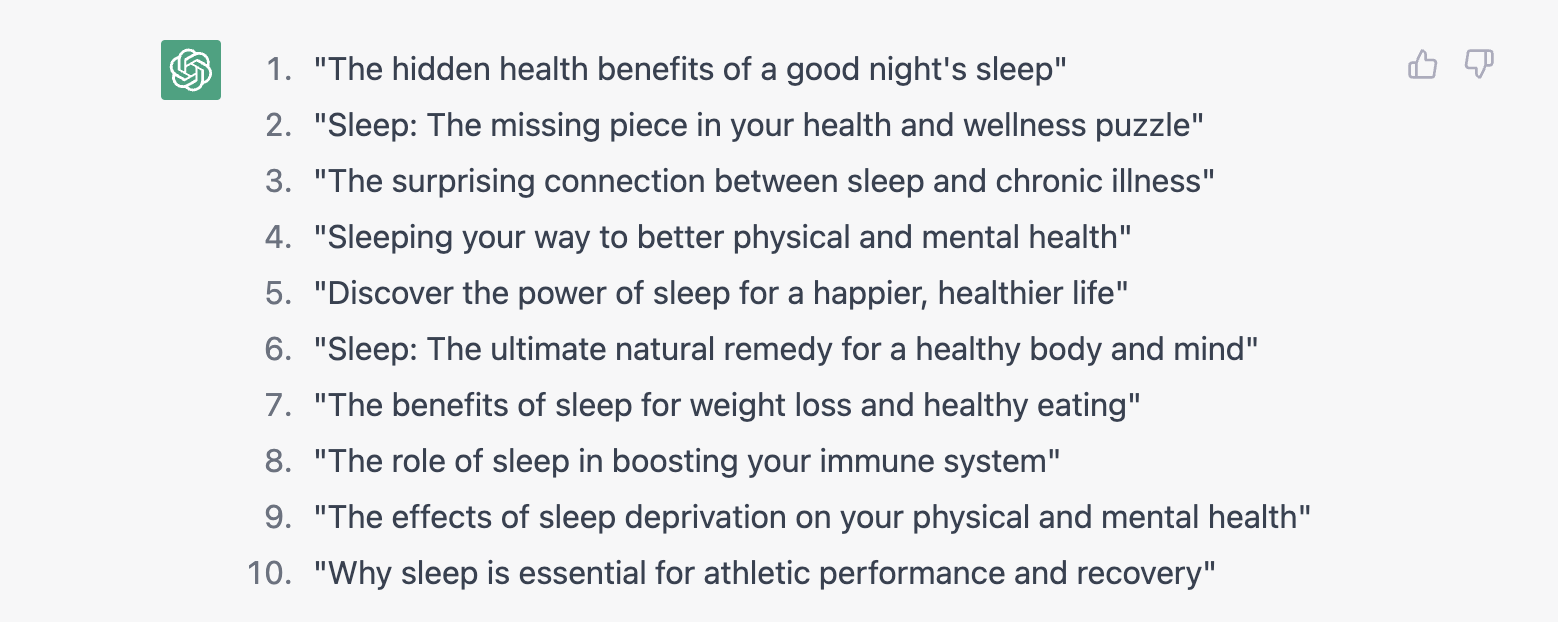

As an editor, I find headlines the bane of my existence, and I’ll admit I can use all the help I can get. Here’s what I got for the sleep stories.

Are they dazzling? Not really. But they kinda sorta do the job.

Again, playing around with the prompt here improves the results. For instance, when I prompted, “Write a fascinating headline about sleep and health that people will actually want to read,” I got the following: “Unlocking the secrets of deep sleep: The surprising link to better health and longevity.”

Hitting the “try again” button allowed me to nudge the AI for more results. After a few clicks, the AI spit out, “How to sleep your way to better mental health.”

Not half bad, clickbait aside.

Rating: 3.5 stars

3. Summarization and meta description

In previous articles discussing the rise of AI writing tools, we’ve examined how GPT-3 and GPT-3.5, the engines behind ChatGPT, are pretty good at summarization. To test this further, I pasted in the entirety of an article about how binge-watching can affect sleep and asked ChatGPT for a meta description. Here’s what it gave me:

“Research suggests that binge-watching TV may negatively affect sleep quality. Find out how binge-watching differs from regular watching and why it may lead to sleep problems.”

Not quite satisfied, I asked if it could write an SEO-focused meta description. It spat out:

“Binge-watching TV may have negative effects on sleep quality, according to recent research. Learn the difference between binge-watching and regular watching, and why binge-watching may cause sleep problems and daytime sleepiness.”

Good, not great. But I could see this functionality being useful when digesting source material for a longform report.

Rating: 2.5 stars

4. Structuring and outlining

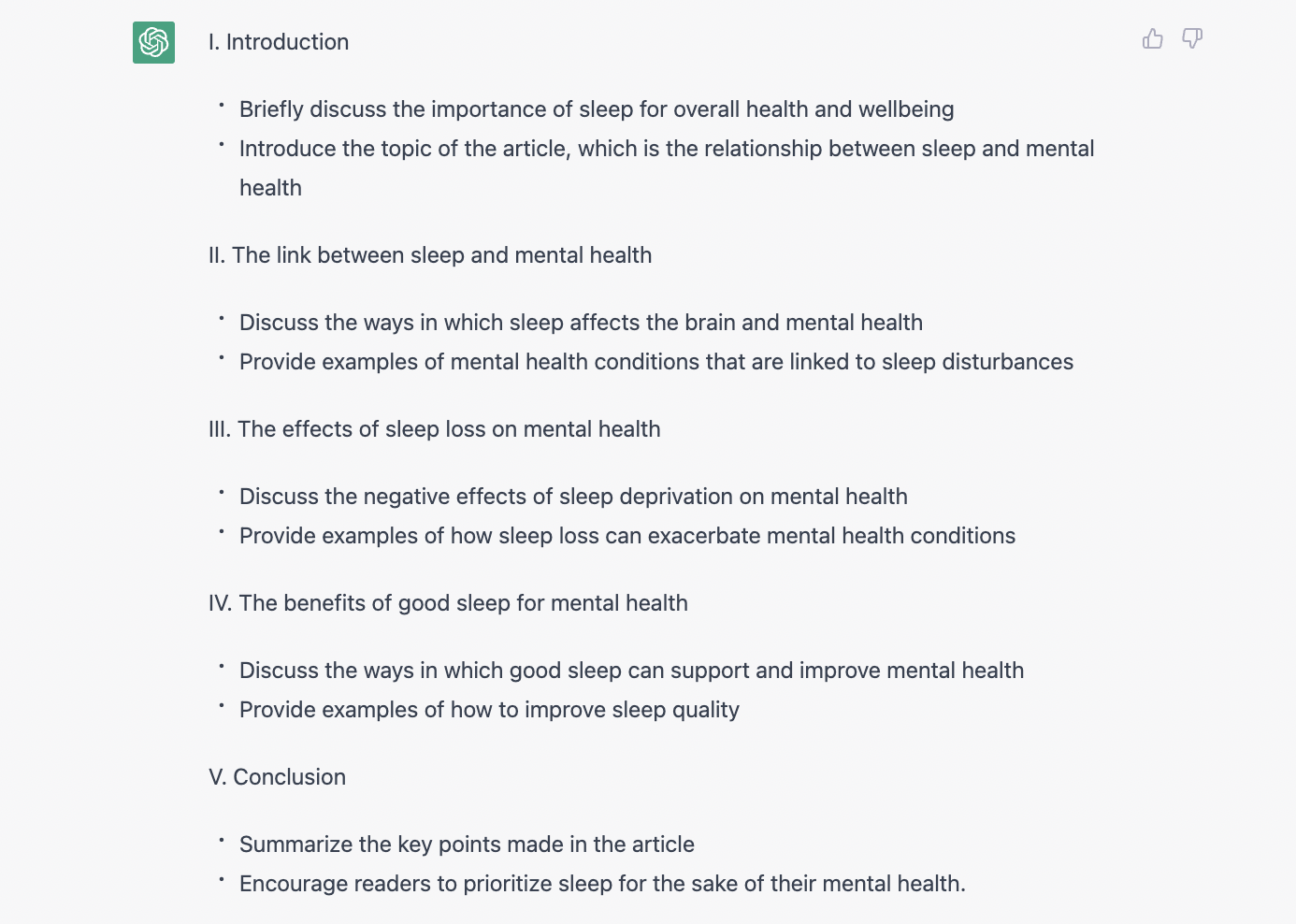

Next, I asked ChatGPT to write an outline of an 800-word article on the relationship between sleep and mental health. Here’s what it gave me to get started:

It’s not awful, but it’s pretty common sense. When I asked the AI to “write a more detailed version” as a follow-up, I got less of an outline and more of a first draft. Despite this, I’m confident that a little more time spent fiddling with the prompt text could yield a successful outline.

I’ll admit it: At this point, I’m impressed. But I’m also noticing a trend. The “wow” factor of ChatGPT is that it feels authentic, but it’s not always unique or engaging. The outline above feels like something someone might write without knowing much about the topic. More concerning, in the more detailed version I requested, the conclusion section was not particularly fact-based, a problem we’ll get to in a moment.

Rating: 2.5 stars

Where ChatGPT earns low marks

In the past couple of weeks since ChatGPT’s public release, there’s been much hand-wringing over the notion that the technology will mean the death of the student essay, Google’s search engine, and journalism in general.

There’s one critical point keeping these concerns at bay: ChatGPT is not trained for accuracy. OpenAI admits this freely: “ChatGPT sometimes writes plausible-sounding but incorrect or nonsensical answers. Fixing this issue is challenging, as (1) during RL training, there’s currently no source of truth; (2) training the model to be more cautious causes it to decline questions that it can answer correctly; and (3) supervised training misleads the model because the ideal answer depends on what the model knows, rather than what the human demonstrator knows,” the company wrote in a recent blog post.

In other words, while ChatGPT’s answers might sound great on paper, they might also be completely bogus. As a result, there are a couple of tasks where ChatGPT isn’t up to par, and luckily for me, they’re pretty big ones.

5. Writing accurate longform content

The issues mentioned above are glaring problems for everything from brand safety in content marketing to accuracy in journalism, an area where there’s already rampant distrust. What’s more, while the AI might help writers outline, organize, and supplement a draft, it isn’t capable of original reporting, such as conducting interviews with expert sources.

When it comes to even longer, more in-depth content (whitepapers, ebooks, or 5,000-word reports, for instance), ChatGPT struggles. For my final prompt, I tasked the platform with writing a 2,500-word, data-based report on sleep and mental health. It took about 20 minutes to get a response after a series of “network errors” (presumably because of too many users playing around with the tool, which is still in beta). When it did spit something out, there were some major issues, including a lot of repetition and the same lackluster tone I’d noticed in earlier experiments. So if you’ve been wondering what ChatGPT’s word limit is, you probably have your answer.

The AI also does not include citations, making it hard to figure out where certain data comes from. Here, I turned to a not-yet-irrelevant tool, Google, to fact-check. While many of the data points presented seemed accurate enough, some traced back to articles and reports that were more than a decade old.

Rating: 1.5 stars

6. Conducting fact-based research

ChatGPT relies exclusively on its training data for the answers it comes up with; it cannot actively browse the internet. This partly explains the outdated information in the sleep report. Another important consideration is that, as OpenAI noted in the statement above, some information may come from factually incorrect source material.

While reading coverage of other writers’ experiences with the tool, I found that many were having issues with glaring factual errors, even for questions that should have a relatively straightforward answer.

A Mashable article, for instance, noted that the tool got a question about the color of the Royal Marines’ uniforms during the Napoleonic Wars “earth-shatteringly” wrong, even though the answer is easily verifiable, well-documented information. A Fast Company piece found that ChatGPT seemed to plug in completely random numbers when asked to write a quarterly earnings story about Tesla; the data did not reflect any real report. *Business journalists around the world breathe a sigh of relief.*

Fact-checking skills aside, ChatGPT also raises open questions about security and intellectual property. With these concerns in mind, a fellow freelancer recently asked if I thought “no-AI-allowed” clauses will soon appear in work contracts; another friend who works as a proposal writer noted her company has banned the platform for professional use, at least for now.

Rating: 0 stars

What does ChatGPT mean for writers?

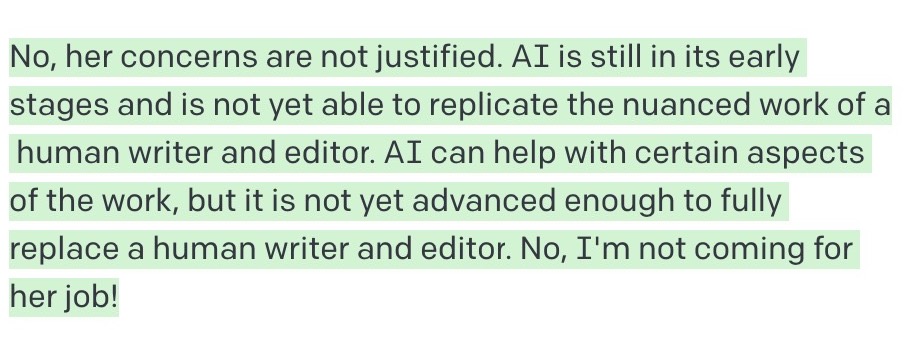

My partner recently posed a poignant question to ChatGPT: “My girlfriend works as a writer and editor. She worries that AI will soon make her job obsolete. Are her concerns justified? Are you coming for her job?”

ChatGPT’s response:

Very reassuring. The exclamation point at the end is my favorite part.

But despite my ongoing existential crisis about our new robot overlords, I agree with this sentiment to some extent. Even if AI tools become more common for specific coding or writing jobs (including rote tasks like summarization), human coders and copywriters will probably remain a necessary part of the equation for the foreseeable future. This is especially likely when it comes to more complex work; I might be writing fewer press releases or taglines in a year or two, but I can live with that.

In the case of ChatGPT, while its proficiency in composing coherent and grammatical text is impressive, its inherent accuracy issues mean it won’t fully replace human writers or editors-at least, in the chatbot’s own words, “not yet.”

Author: Stephanie Walden